Illegal online content and liability of Internet intermediaries: Why the messengers should not be shot

Author: Stephanie Borg Psaila

There are approximately 550 million websites on the Internet; around 60 hours of video are uploaded to YouTube every minute; and around 140 million tweets, most of them containing links, are posted on Twitter every day.

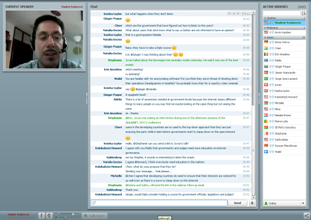

Illegal online content and liability of Internet intermediaries was the subject of last week’s Internet governance (IG) webinar, hosted by Diplo’s Vladimir Radunovic. Members of the wider IG community worldwide attended the webinar.

The debate on intermediary liability is not new, but it has intensified with the recent ACTA/SOPA/PIPA discussions. These discussions have brought intellectual property rights (IPR), privacy, content control, and the liability of intermediaries into sharp focus.

In last week’s webinar, Vladimir discussed the blurred line between the two broad categories of intermediaries – ISPs and content providers – and the various types of questionable content that some governments have attempted to control, including ius cogens content, culturally sensitive content, and politically and ideologically sensitive content.

‘Conceptually sensitive’ content is a more recent challenge. The older generation regards copyrighted content as sacred, while the younger generation is immersed in a culture of sharing. This culture has popularised peer-to-peer and social media sharing and, with it, a lower regard for copyright. This may require rethinking the concept of IPR in light of current trends.

Vladimir discussed the main reasons for ‘shooting the messenger’. Internet intermediaries constitute a single point of control: they are well placed to control the traffic that passes through their networks. In governments’ eyes, holding intermediaries liable is a simple way of solving the problem.

Yet Vladimir explained how the current approaches to content control – including domain name system (DNS) filtering, IP filtering, deep packet inspection (DPI), self-censorship, and user-data disclosure – are inadequate and give rise to many challenges. For example, it is simply not feasible for companies like Twitter and Facebook to review the huge amount of content shared on their platforms every day.

New models are therefore required: reaching a common understanding among stakeholders, which can be a challenge in itself; drafting clear regulations that do not stifle innovation; and establishing clear procedures for how questionable content should be treated.

This is a brief overview of what was discussed. During the webinar, Vladimir went into more detail, illustrating the theory with practical examples and discussing the issues with participants. The webinar’s podcast is available here; the webinar’s PPT is available here.